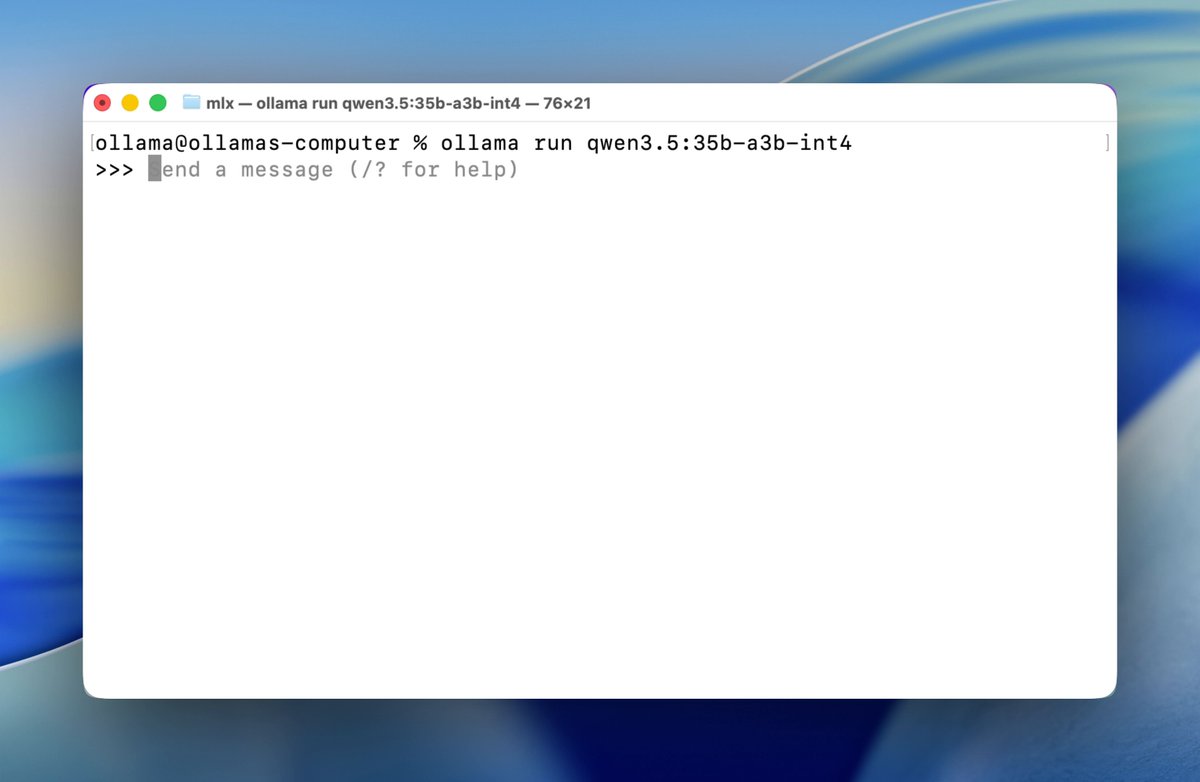

Ollama integrates Apple's MLX framework to maximize local large-model performance on Apple Silicon, speeding up agents such as OpenClaw.

Deployment & Operations 📅 2026/03/31

#deployment#developers#low-risk#macOS#manual-trigger#semi-automated#code-repository#performance-optimization#report#in-production

Ollama has been updated to run at its fastest on Apple silicon, powered by MLX, Apple's machine learning framework. This change unlocks much faster performance for demanding work on macOS:

- Personal assistants like OpenClaw
- Coding agents like Claude Code, OpenCode, or Codex