NVIDIA releases NemoClaw, a hardware-agnostic AI agent platform designed to be compatible with any chip architecture.

Deployment & Operations 📅 2026/03/12

#AI agents #API #demo #developers #fully automated #GitHub #medium risk #event-triggered #code repository #enterprise #open source #report

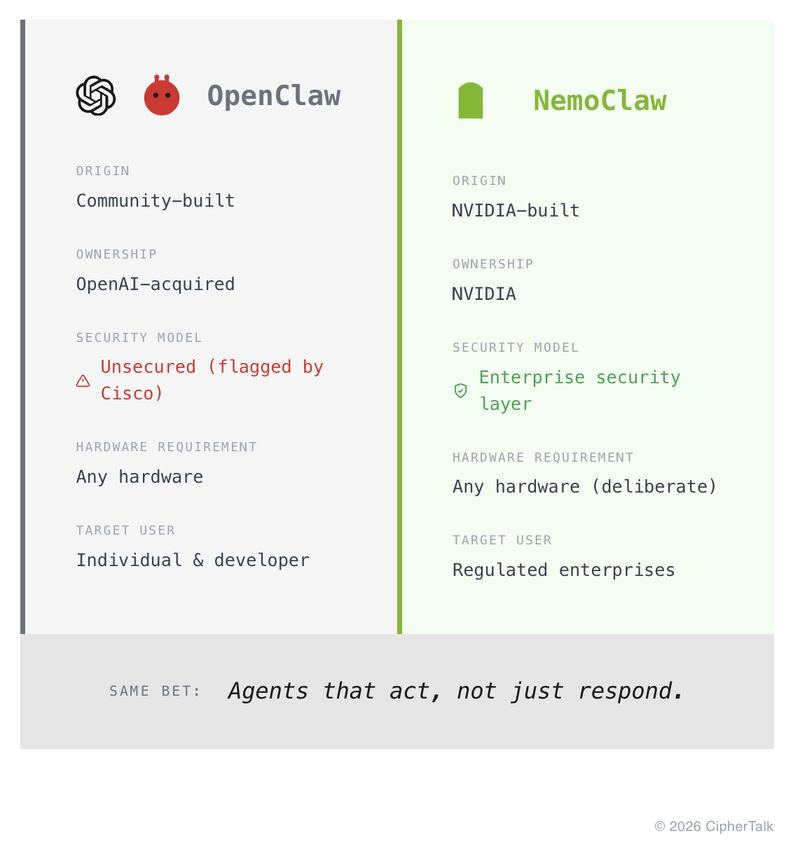

NVIDIA is open-sourcing the one thing that could make its GPUs irrelevant. That is not a provocation. That is the reported design decision buried in yesterday's Wired story about NemoClaw, NVIDIA's upcoming enterprise AI agent platform. The platform is hardware-agnostic: it runs on NVIDIA chips, AMD chips, Intel chips, or CPU-only setups. NVIDIA built it that way on purpose.

Some context on why that is strange: NVIDIA's $3 trillion valuation has always rested partly on CUDA, its proprietary software layer that made it painful to leave NVIDIA hardware once you were trained on it. NemoClaw inverts that logic. The agent orchestration layer, which is where a lot of the value in enterprise AI is heading, will work on whatever your data center already runs.

The strategic read is that NVIDIA is copying Meta's Llama playbook: open-source the layer that matters to get it adopted everywhere, then trust that accelerating enterprise AI workloads will drive GPU demand anyway. Whether NemoClaw becomes the standard or quietly fades into GitHub history depends on execution details we do not yet know, specifically whether it genuinely supports multiple model backends or subtly favors NVIDIA-optimized ones.

What I do know is that Jensen Huang is expected to announce this formally at GTC on March 16. The keynote description already covers "open models, agentic systems, and physical AI." NVIDIA stock is up nearly 2% on the news.

For investors: the hardware-agnostic framing is either a confident hedge against AMD and custom silicon from the hyperscalers, or it is a tell that NVIDIA knows its moat is more contested than its market cap implies. Probably both.