Configure Grok integration in OpenClaw using xAI API keys and usage-based billing.

Setup guide for integrating Grok into OpenClaw via xAI API endpoint configuration and token-based cost management.

📅 2026/04/19

Explore OpenClaw playbooks in the Deploy & Ops style

One-click AI deployment workflow bypassing GPU procurement and rebranding.

📅 2026/04/18

Announcement of OpenClaw's status as a historic open source milestone and its evolution into a fully autonomous AI agent deployed by NVIDIA.

📅 2026/04/18

Implementation of recursion capping, idempotency keys, and event-driven notifications to prevent agent workflow failures and reduce costs.

📅 2026/04/18

Global installation of a social media sync agent skill for MCP frameworks.

📅 2026/04/18

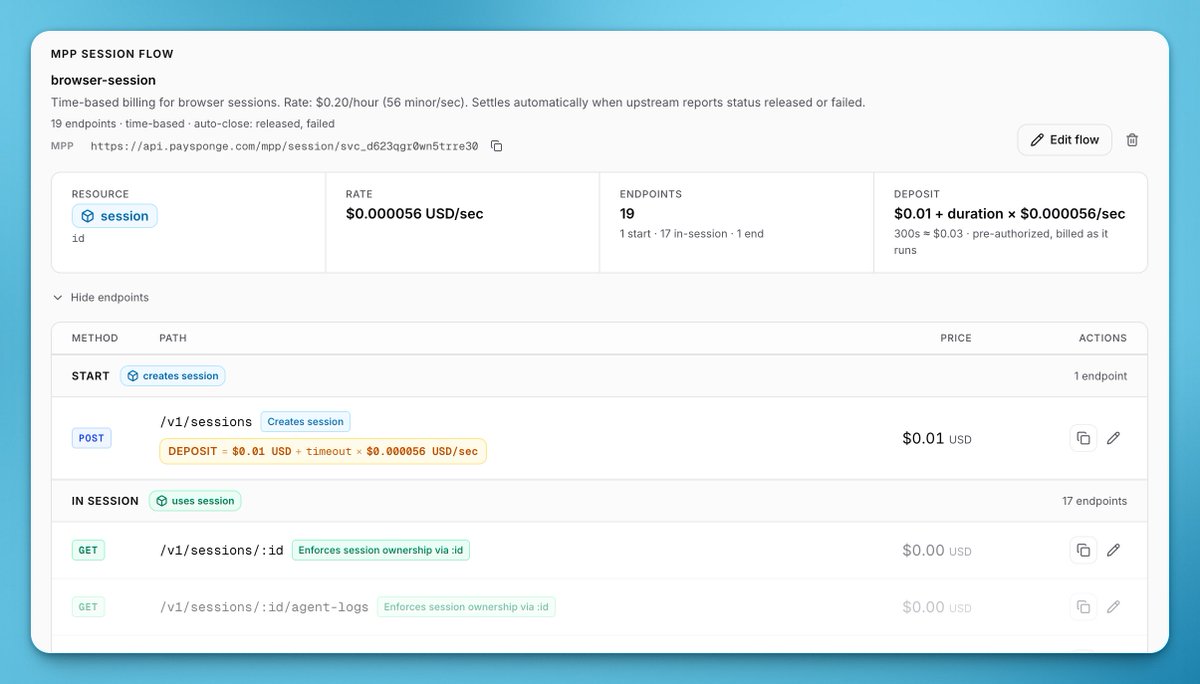

Configuring dynamic pricing models (request, usage, time-based) for AI agents using Sponge Gateway and MCP protocols.

📅 2026/04/18

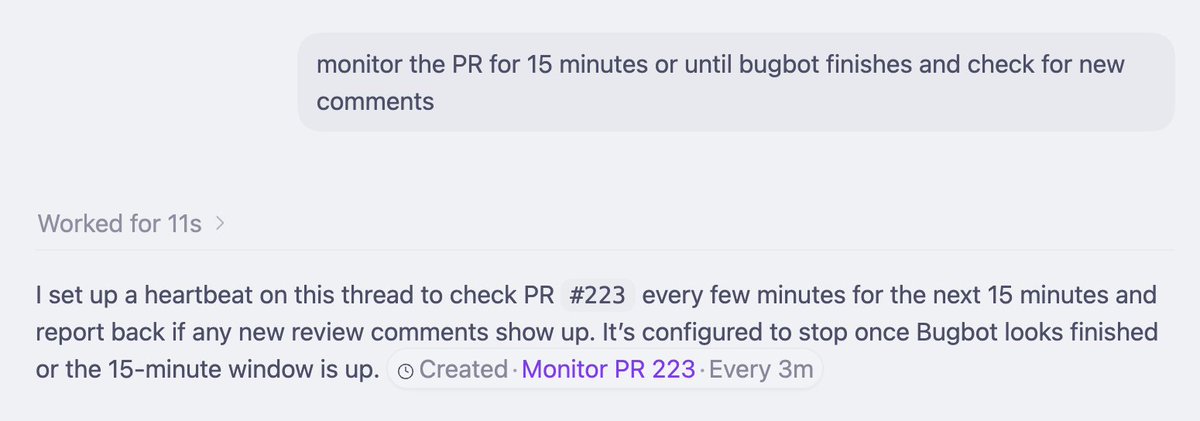

Automated heartbeat configuration in Codex using natural language prompts via OpenClaw.

📅 2026/04/18

Migrating OpenClaw to a Docker-based VPS on Hostinger to optimize costs and ensure stability after failed AWS trials.

📅 2026/04/17

Configuring device security policies to intercept and handle OpenClaw root access requests.

📅 2026/04/17

Setting up an AI agent with SSH access and integrating the MiniMax model for automated development tasks.

📅 2026/04/16

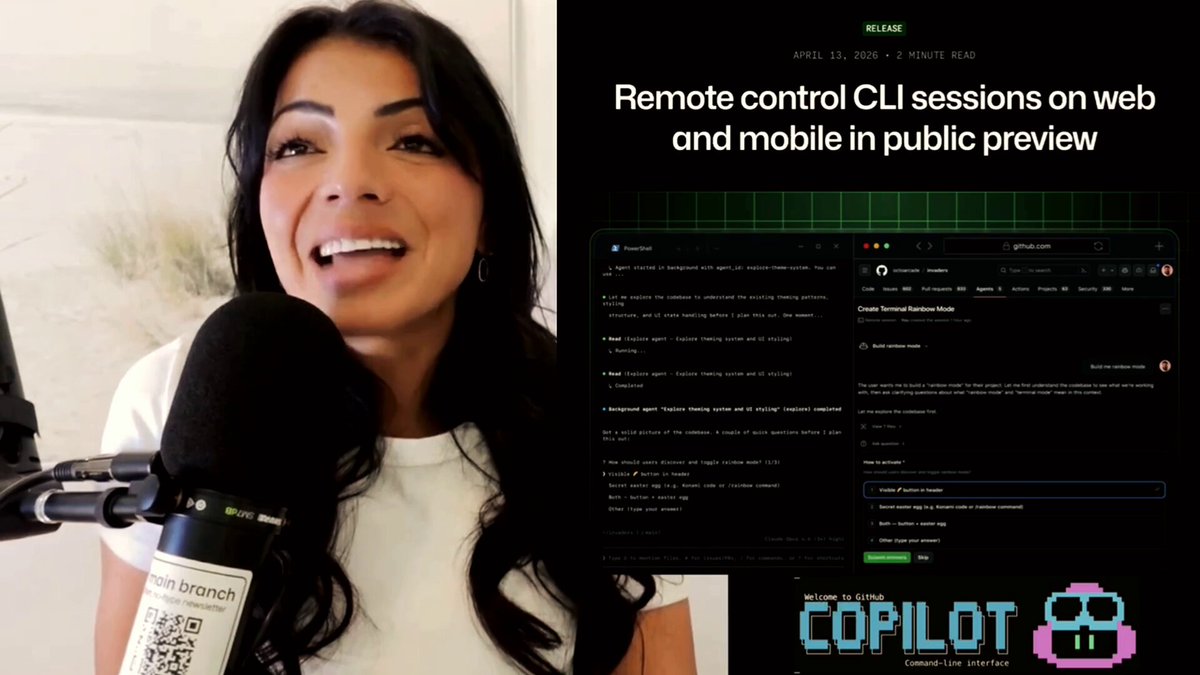

Remote monitoring of automation workflows across continents using CLI keepalive tools.

📅 2026/04/16

Deployment of a standalone binary for managing multi-agent AI systems.

📅 2026/04/16
