Ollama integrates Apple's MLX framework to maximize local LLM performance on Apple Silicon for agent

Deploy & Ops 📅 2026/03/31

#Deployment #Developer #Low Risk #macOS #Manual Trigger #Semi-Automatic #Code Repository #Performance Optimization #Report #In Production

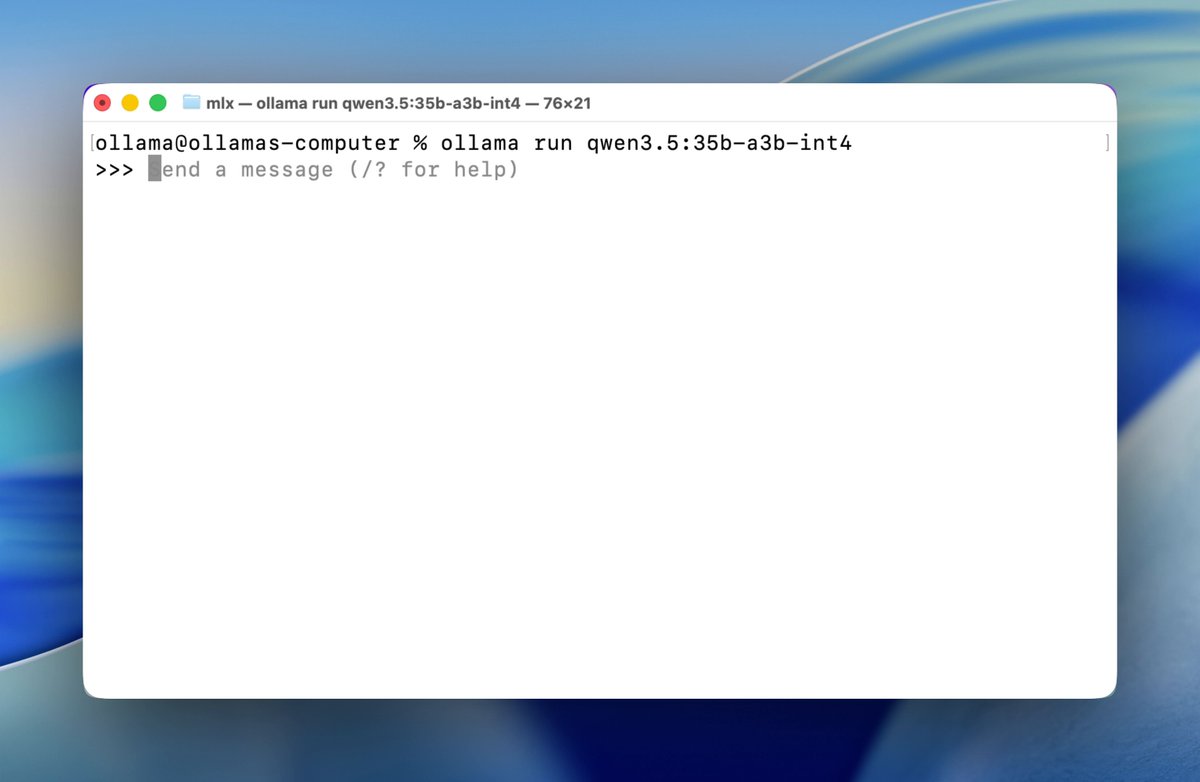

Ollama has been updated to run at its fastest on Apple silicon, powered by MLX, Apple's machine learning framework. The change delivers much faster performance for demanding work on macOS:

- Personal assistants like OpenClaw
- Coding agents like Claude Code, OpenCode, or Codex