Compares OpenClaw and Claude Cowork performance on browser automation tasks highlighting cost and se

Testing & Debug 📅 2026/03/26

#API #Browser #Developer #Medium Risk #Reusable #Semi-Automatic #Code Repository #Cost Optimization #Report #Testing

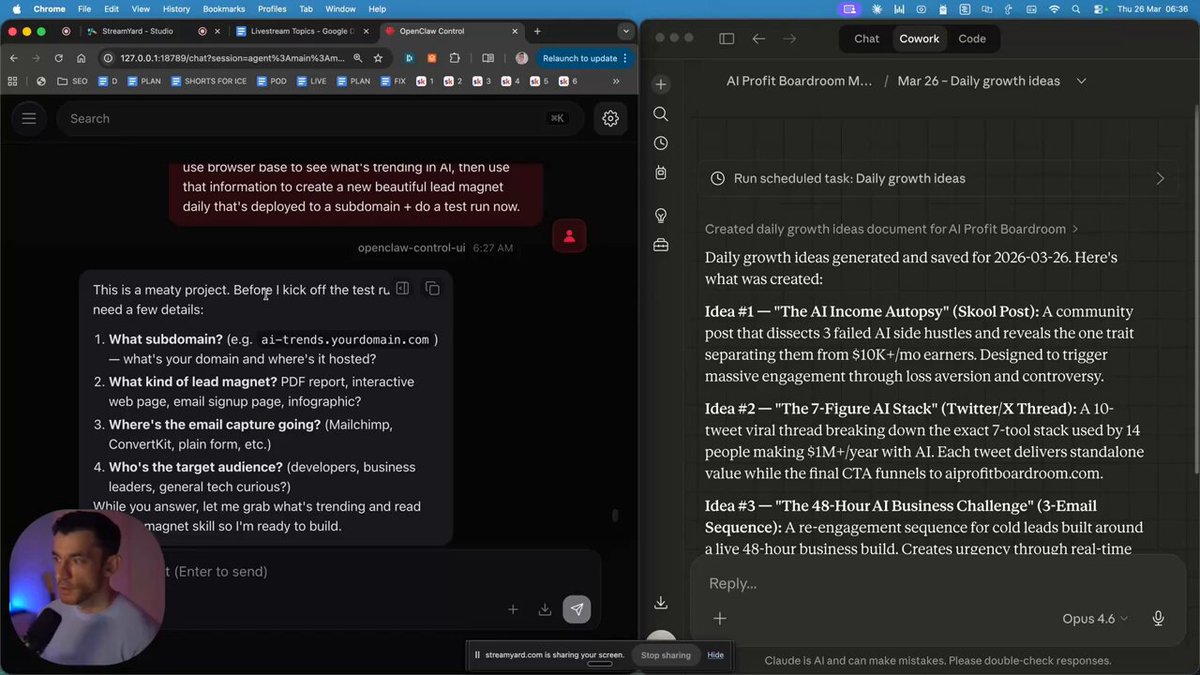

This guy ran OpenClaw and Claude Cowork side by side. Same task. Same prompt. OpenClaw refused to do it. Claude Cowork opened Chrome and got it done.

Here's every difference that matters:

→ Browser use: Claude Cowork wins every time
→ API cost: Cowork is a flat monthly fee. OpenClaw burned $38 in one day on Opus tokens
→ Projects: Cowork keeps everything organized. OpenClaw has no real separation
→ Setup: Cowork needs zero technical skills. OpenClaw needs API tokens, docs, and patience
→ Phone connection: Cowork uses a QR code. OpenClaw needs a bot token, API key, and pairing code

OpenClaw wins on one thing: model flexibility. You can test any new AI the day it drops. But 99% of people don't need that. They need something that works the first time, every time. That's Cowork.
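
To put the API cost point in numbers, here is a rough sketch of the break-even arithmetic. The $38 per day figure comes from the comparison above. The flat monthly fee is not stated there, so `flat_monthly_fee` is a hypothetical placeholder and not a real price, and the function names are illustrative only.

```python
def projected_monthly_api_cost(daily_cost: float, active_days: int = 30) -> float:
    """Project pay-per-token spend, assuming every active day costs the same."""
    return daily_cost * active_days


def break_even_days(flat_monthly_fee: float, daily_cost: float) -> float:
    """Days of API usage at daily_cost that add up to the flat monthly fee."""
    if daily_cost <= 0:
        raise ValueError("daily_cost must be positive")
    return flat_monthly_fee / daily_cost


if __name__ == "__main__":
    daily_cost = 38.0          # from the comparison: one day of Opus tokens
    flat_monthly_fee = 100.0   # hypothetical placeholder, swap in the real plan price

    monthly = projected_monthly_api_cost(daily_cost)
    days = break_even_days(flat_monthly_fee, daily_cost)
    print(f"Projected API spend over 30 days: ${monthly:.2f}")
    print(f"Flat fee is matched after {days:.1f} days at that rate")
```

A single heavy day may not be typical, so treat the 30-day projection as an upper-bound estimate rather than a measured monthly bill.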